It’s just over two weeks since I launched a practical toolkit for assessing ethics and governance of Artificial Intelligence / Machine Learning systems, and automated decision making systems – UnBias AI For Decision Makers (AI4DM). The launch has sparked a series of discussions with friends, colleagues and strangers, as well as feedback from others. This has prompted me to explain a bit more about its genesis and the benefits I believe it offers to people and organisations who use it.

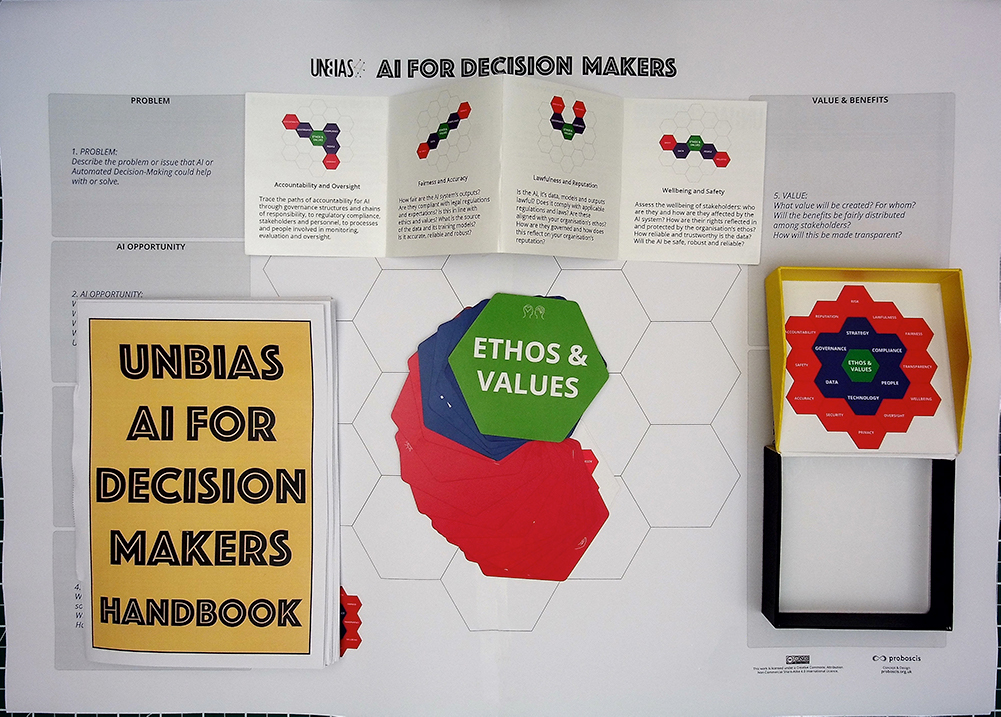

UnBias AI4DM is a critical thinking toolkit for bringing together people from across an organisation or group to anticipate and assess – through a rigorous process of questioning and a whole systems approach – the potential harms and consequences that could arise from developing and deploying automated decision making systems (especially those utilising AI or ML). It can also be used to evaluate existing systems and make sure that they are in alignment with the organisation’s core mission, vision, values and ethics.

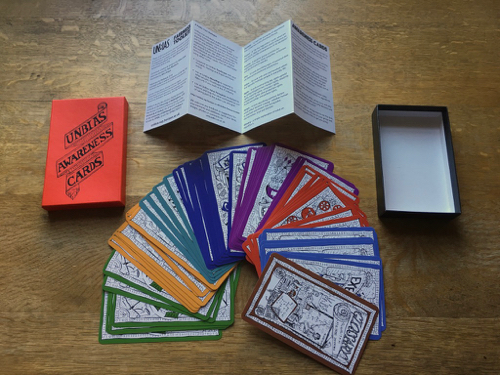

It is an engagement tool (rather than software) that fosters participation and communication across diverse disciplines – the cards and prompts provide a framework for discussions; the worksheet provides a structured method for capturing insights, observations and resulting actions; the handbook provides ideas and suggestions for running workshops etc. I am also creating and sharing instructional animations and videos throughout the crowdfunding campaign – raising funds to make a widely affordable production version.

AI4DM builds on a previous toolkit I created – UnBias Fairness Toolkit – released in 2018 that is aimed at building awareness (in young people and non-experts) of bias, unfairness & untrustworthy effects in algorithmic systems. Used together, the two toolkits enable such systems to be explored from both the perspectives of those creating them, and those on the receiving end of their outcomes. AI4DM is available in both a free downloadable DIY print-at-home version and a soon-to-be-manufactured physical production set.

Benefits of Using AI4DM

The toolkit has two key strands of benefits for groups and organisations who adopt and use it:

Addressing Issues:

- a structured process for identifying problems and devising solutions

- emphasises collective obligations and responsibilities

- a means of addressing complex challenges

- a way to anticipate future impacts (e.g. new legislation & regulations or shifts in public opinion)

Staff Development:

- develops communication skills across different disciplines, fields and sectors

- supports team building and cohesion

- develops understanding of roles in teams and group work

- evolves a culture of shared practices across different disciplines and fields within an organisation

AI4DM as a Teaching Aid

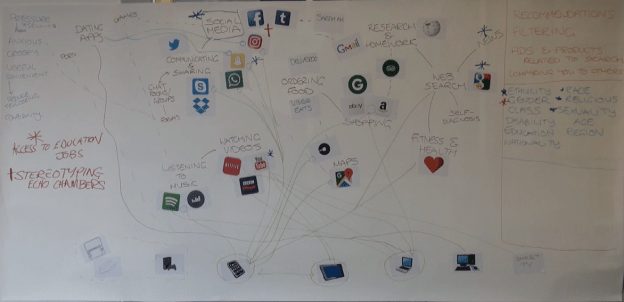

I have also been having discussions with academic colleagues who teach in various disciplines at a number of universities about using the toolkit in teaching and lecturing. As with the UnBias Fairness Toolkit, AI4DM provides a structured framework for looking at a wide range of issues from multiple perspectives and exploring how they align or misalign with an organisation’s core ethos and values. It can be used practically to look at a real world example or as a conceptual exercise to explore the potential for harmful consequences to emerge from a development process that doesn’t integrate ethical analysis alongside other key considerations, such as legal or health and safety regulations.

I’ll soon be adding a short animation to our YouTube Playlist to demonstrate using the toolkit in teaching settings.

Using the Toolkit Online

The pandemic has forced everyone to rapidly shift from in-person meetings, workshops, classes and lectures to distributed engagement via online platforms. So I have been experimenting with ways to use the toolkit in such spaces – from making a test interactive version using the MURAL online whiteboard collaboration tool, to tests using Zoom and a hybrid combination of physical cards and online annotation of the Worksheet. I’ll be adding a short animation soon to the Playlist to demonstrate using the toolkit online.

In summary, I believe that the best way to use the toolkit online is in a hybrid fashion:

- Make sure ALL the participants have their own physical set of cards (either a production set or DIY print-at-home version). In the long gaps between speaking and direct participation (a familiar feature of online situations) this gives the participants something tangible that they can contemplate and play with that is contextually relevant – given the many distractions of working/studying from home that are usually absent (or less intrusive) in traditional meeting or teaching spaces. I remain convinced that there is a positive cognitive impact of combining manual activities with critical thinking – it grounds ideas in ways that seem to promote associative connections; and may be similar to the effect identified in recent studies on the differences in learning outcomes when writing notes versus typing during lectures;

- Assign specific roles or topics to participants so that they can focus on their contribution to the session whilst listening to others;

- Have a Facilitator or Moderator ‘host’ the session and manage who is participating or speaking at any one time;

- The Issue Holder (who is first among equals) should keep the key issue or problem being addressed at the forefront of the conversation and make connections to the contributions of other participants;

- The Scribe should annotate the Worksheet on a Shared Screen (perhaps using an online collaboration tool such as Miro or MURAL, or simply annotating the PDF) with observations and notes, and post photos of the evolving matrix of cards as they are added;

- Use the Chat area to encourage participants to add their own annotations, ideas, links and other relevant observations to the session, assisting the Scribe in capturing as much of the richness of the conversation and discussions as possible.

Genesis

The UnBias Fairness Toolkit was an output of the UnBias project led by the Horizon Institute at the University of Nottingham with the Universities of Oxford and Edinburgh and Proboscis (and funded by the EPSRC). It was designed to accommodate future extensions and iterations so that it could be made relevant to specific groups of people (such as age groups or communities of interest) or targeted around particular issues and contexts (banking and finance; health data; education; transportation and tracking etc).

At the techUK Digital Ethics Summit in December 2019 I ran into Ansgar Koene, one of my former UnBias project colleagues, who is a Senior Research Fellow at Horizon and also Global AI & Regulatory Leader at EY Global Services. Ansgar proposed developing an extension to the UnBias Fairness Toolkit aimed at helping people inside corporations and public organisations get to grips with AI ethics and governance issues in a practical and tangible way. Over the next few months this became a formal commission from EY to devise a prototype, which then evolved over the summer of 2020 into the full companion toolkit, UnBias AI For Decision Makers.

Support Our Crowdfunding Campaign

You can back our Indiegogo campaign to create a widely affordable production run of the toolkit – perks include the AI4DM toolkit itself at £25 (+ p&p) reduced from its retail price of £40, as well as the original UnBias Fairness Toolkit at £40 (+ p&p) reduced from its retail price of £60. Perks also include a Combo Pack of both toolkits at £60 (+ p&p) as well as multiples of each (with big savings). I am also offering a perk with 50% off a dedicated one-to-one Facilitator Training Package with me (2 x 1.5 hour video meetings + toolkits + personalised facilitator guide) at £360 instead of £720.

The campaign ends on 15th October 2020 – back it now to ensure you get your set!
